AI agents consume web content, synthesise it, and present conclusions to people who have no practical way to verify the underlying sources. The content those agents find was overwhelmingly created to be found, not to be right.

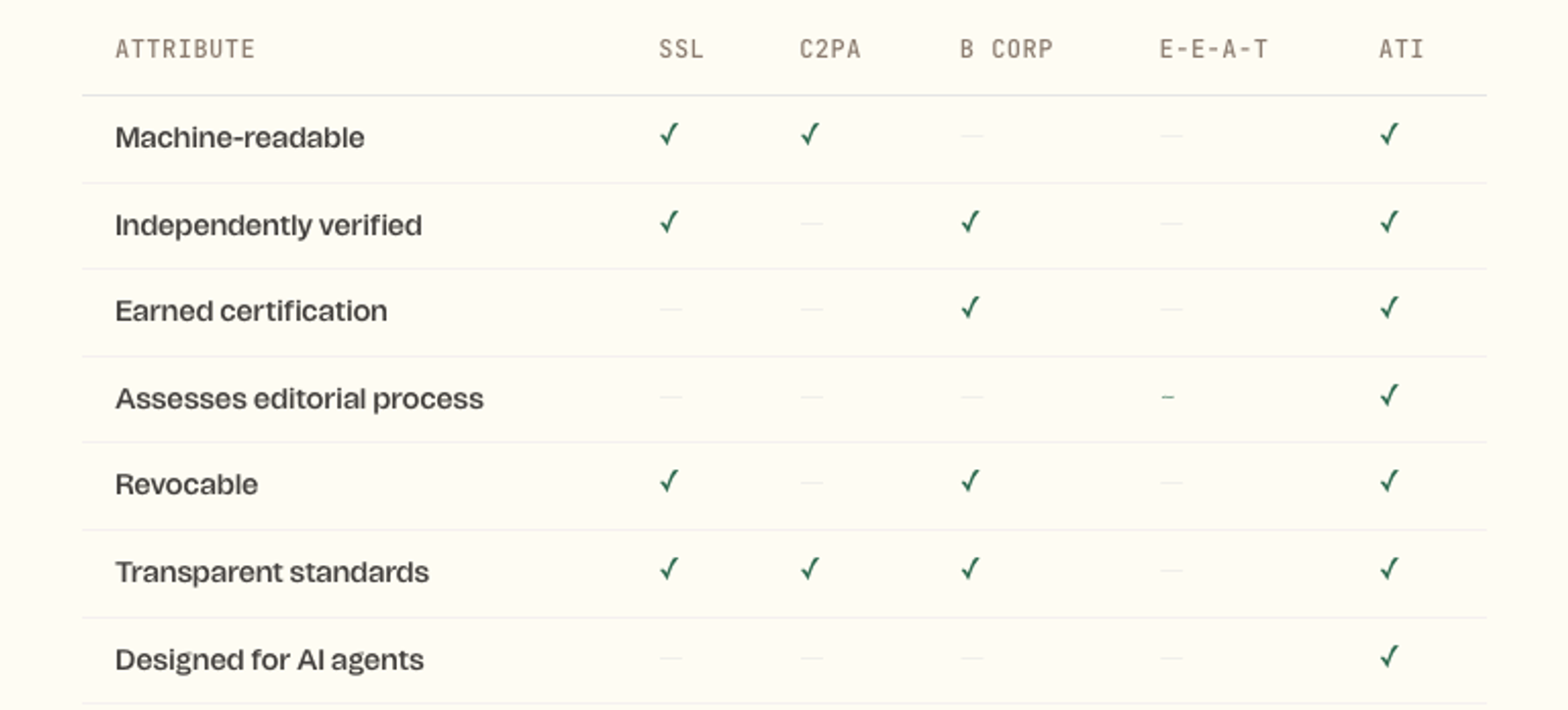

There is currently no standardised, earned, verifiable signal that tells an AI system — or a human — that a given source meets a minimum bar of trustworthiness. Schema markup tells you what a page claims to be. Backlinks tell you how popular it is. Neither tells you whether the content is accurate, accountable, or safe to reason from.

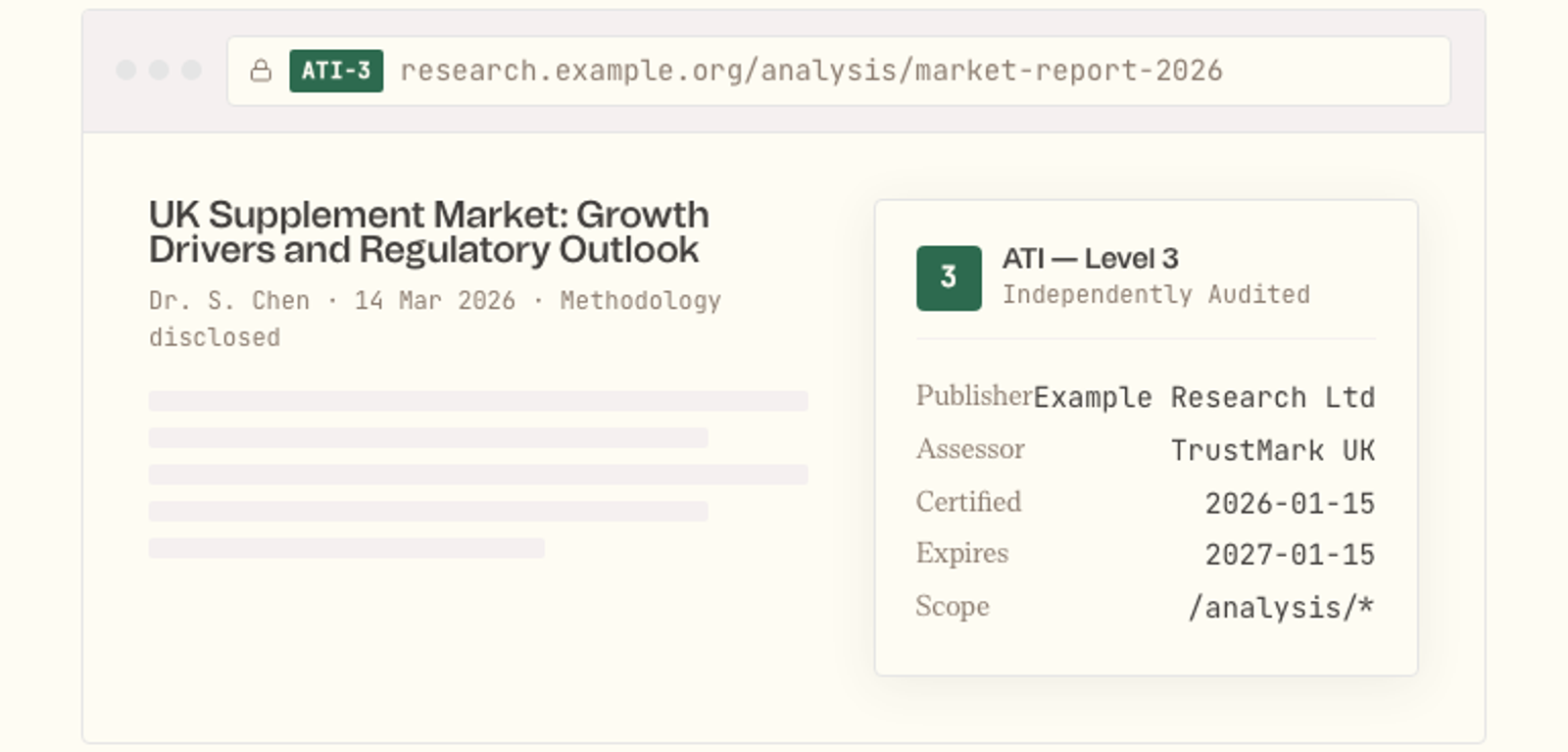

The Badge

A browser-level trust indicator — similar to the SSL padlock — that appears when you visit a certified source. Machine-readable for AI agents. Visible to humans.

Scoped certification. Certification covers a defined part of a site, not the whole domain. In this example, the badge applies to /analysis/*, the publisher’s research section; marketing pages on the same domain are not covered. AI agents and browsers can tell the difference.
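As a rough illustration of scope matching, the sketch below checks whether a URL falls under a certified path pattern such as /analysis/*. The function name, the host name `publisher.example`, and the use of shell-style wildcards are assumptions made for this example; the framework does not yet define a pattern syntax.

```python
from fnmatch import fnmatchcase
from urllib.parse import urlsplit


def in_certified_scope(url: str, certified_host: str, scope_patterns: list[str]) -> bool:
    """Return True if `url` is on the certified host and matches a scope pattern.

    Hypothetical helper: patterns use shell-style wildcards, so "/analysis/*"
    matches any path that starts with "/analysis/".
    """
    parts = urlsplit(url)
    if parts.hostname != certified_host:
        return False
    path = parts.path or "/"
    # Refuse paths with ".." segments rather than guess how a server resolves them.
    if ".." in path.split("/"):
        return False
    return any(fnmatchcase(path, pattern) for pattern in scope_patterns)


SCOPE = ["/analysis/*"]
print(in_certified_scope("https://publisher.example/analysis/2024/rates", "publisher.example", SCOPE))
print(in_certified_scope("https://publisher.example/pricing", "publisher.example", SCOPE))
```

Under these assumptions the research page is in scope and the marketing page is not. A real specification would also need to settle URL normalisation, subdomains, and redirects.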

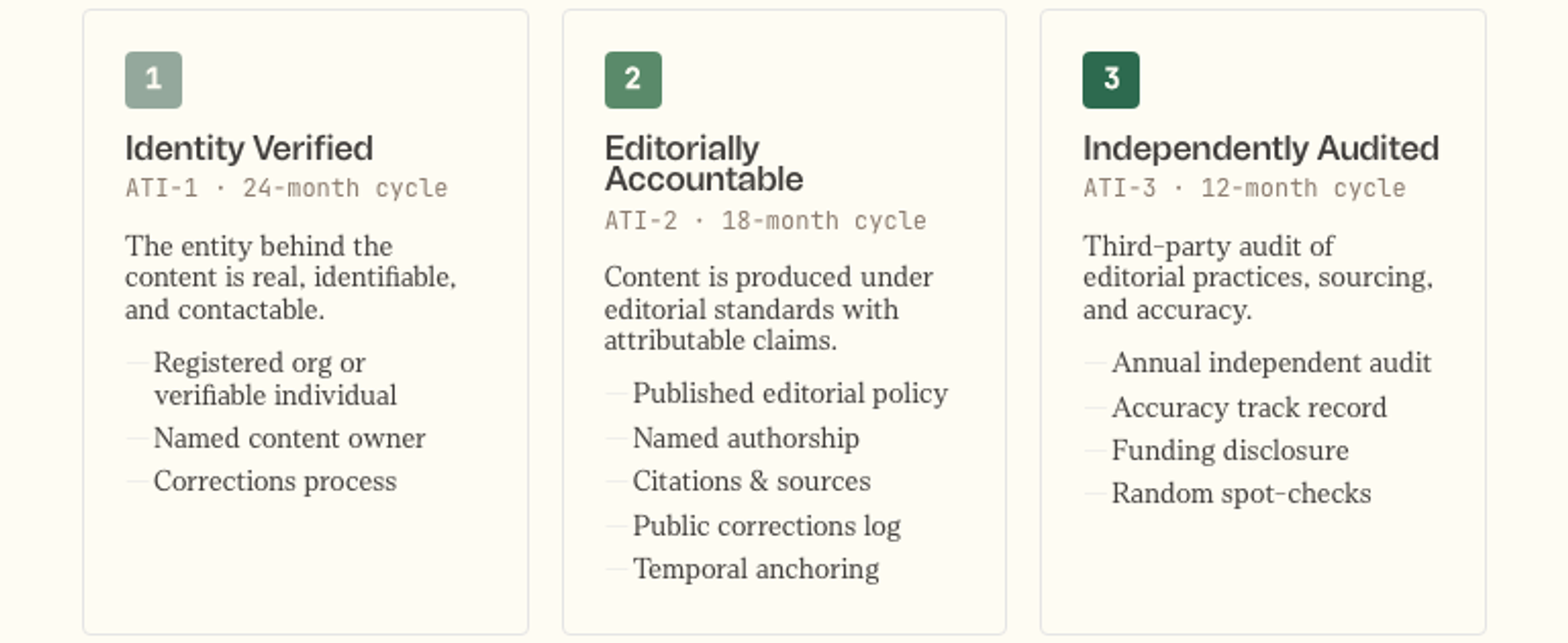

Certification Tiers

Three levels of earned certification, reflecting different levels of rigour. Each tier builds on the one below it.

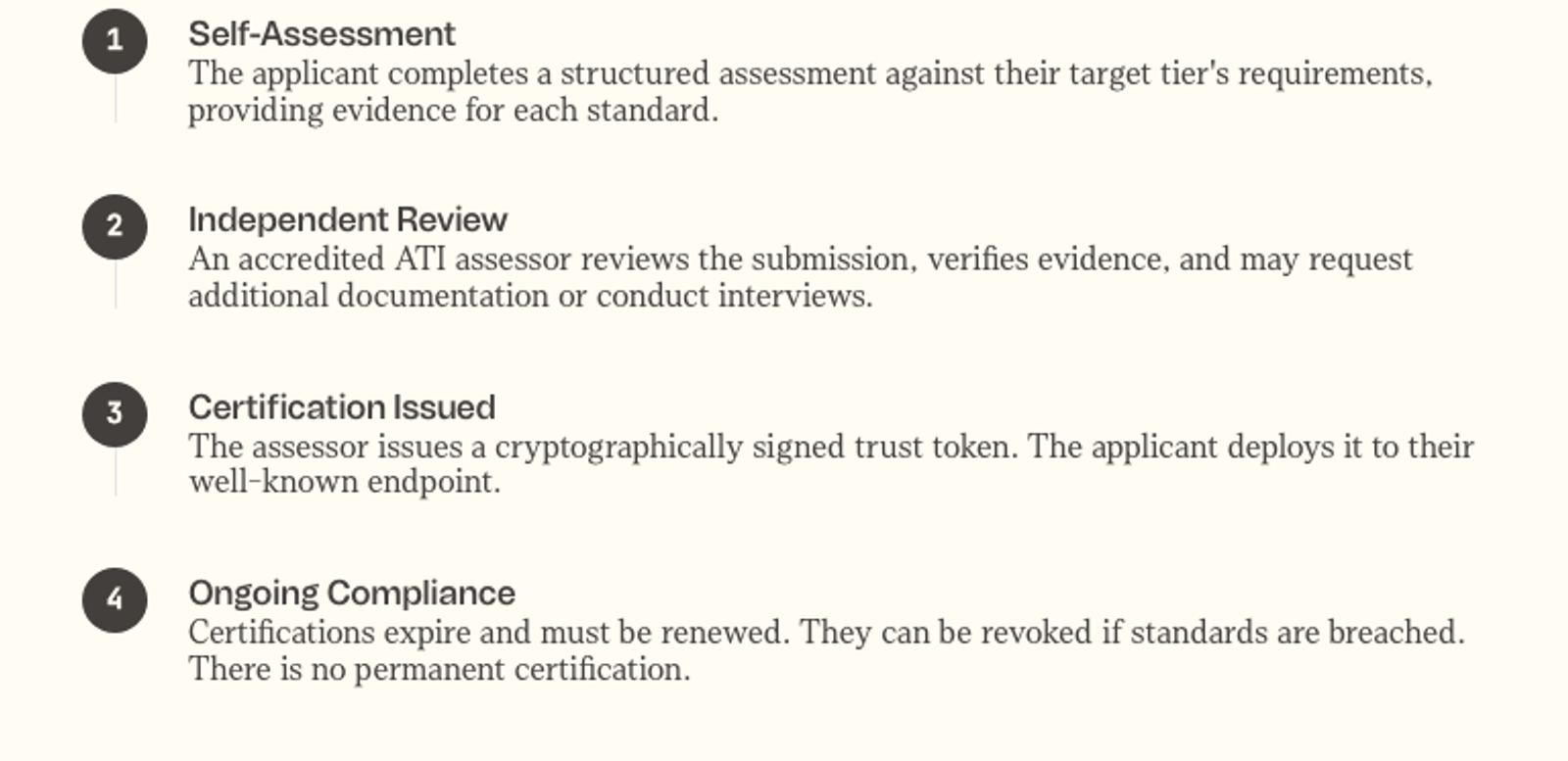

How Certification Works

The certification follows a four-step process: self-assessment against the target tier’s requirements, independent review by an accredited assessor, issuance of a cryptographically signed trust token, and ongoing compliance with renewal cycles and potential revocation.

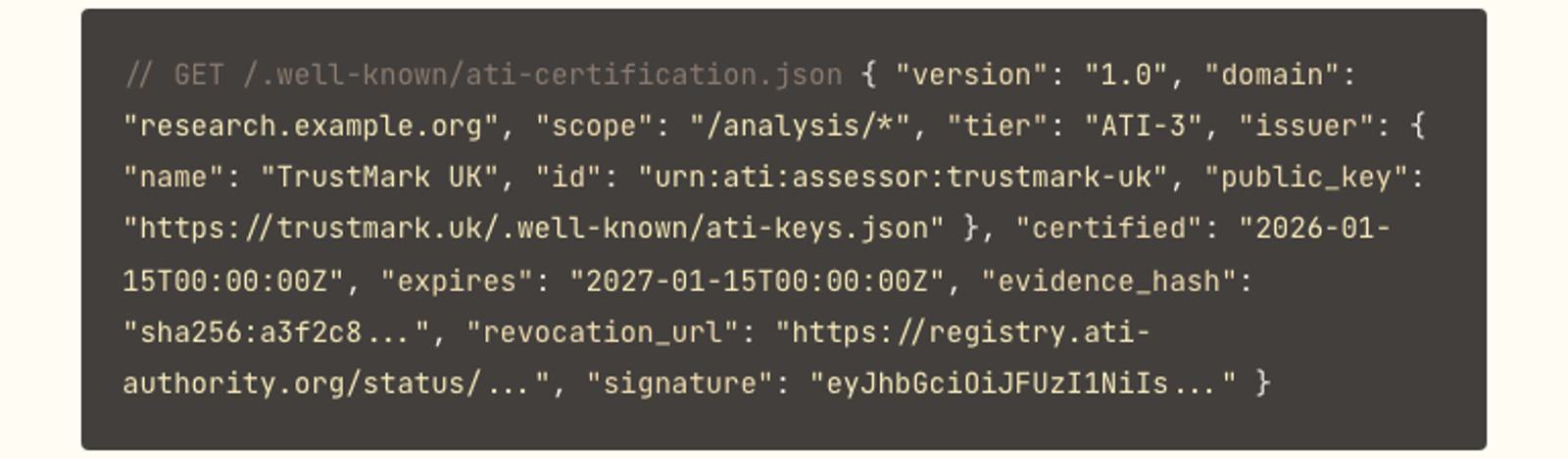

The Trust Token

The certification is expressed as a signed JSON Web Token served at a well-known endpoint. AI agents verify the token by checking the signature against the assessor’s public key and confirming the certification hasn’t been revoked, much as browsers validate TLS (SSL) certificates today.
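To make the token shape concrete, here is a minimal sketch of what the claims might look like and how an agent could split a compact JWT into its parts. The claim names `ati_tier` and `ati_scope`, the host names, and the `ES256` algorithm choice are assumptions for illustration; `iss`, `sub`, `iat`, `exp`, and `jti` are standard registered JWT claims. The code only parses the token. It does not verify the signature, which would need a JOSE or cryptography library and the assessor's public key.

```python
import base64
import json


def b64url_encode(raw: bytes) -> str:
    """Base64url without padding, as used by compact JWTs."""
    return base64.urlsafe_b64encode(raw).rstrip(b"=").decode("ascii")


def b64url_decode(segment: str) -> bytes:
    """Restore the stripped padding, then decode."""
    return base64.urlsafe_b64decode(segment + "=" * (-len(segment) % 4))


def split_token(token: str) -> tuple[dict, dict, bytes, bytes]:
    """Return (header, claims, signing_input, signature) WITHOUT verifying anything."""
    try:
        header_b64, payload_b64, signature_b64 = token.split(".")
    except ValueError:
        raise ValueError("expected three dot-separated segments") from None
    header = json.loads(b64url_decode(header_b64))
    claims = json.loads(b64url_decode(payload_b64))
    signing_input = f"{header_b64}.{payload_b64}".encode("ascii")
    return header, claims, signing_input, b64url_decode(signature_b64)


# Hypothetical claim set; the real format is still to be specified.
EXAMPLE_CLAIMS = {
    "iss": "https://assessor.example",  # accredited assessor that signed the token
    "sub": "publisher.example",         # certified publisher
    "ati_tier": 2,                      # assumed claim name for the tier
    "ati_scope": ["/analysis/*"],       # assumed claim name for the scope
    "iat": 1735689600,
    "exp": 1767225600,
    "jti": "cert-0001",                 # identifier a revocation check could use
}

# Assemble a demo token with a placeholder where the real signature would go.
example_token = ".".join([
    b64url_encode(json.dumps({"alg": "ES256", "typ": "JWT"}).encode()),
    b64url_encode(json.dumps(EXAMPLE_CLAIMS).encode()),
    b64url_encode(b"placeholder-signature"),
])

header, claims, signing_input, signature = split_token(example_token)
print(header["alg"], claims["sub"], claims["ati_tier"])
```

The `signing_input` and `signature` values are what a verifier would pass to the signature check. Claims read this way must not be trusted until that check succeeds.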

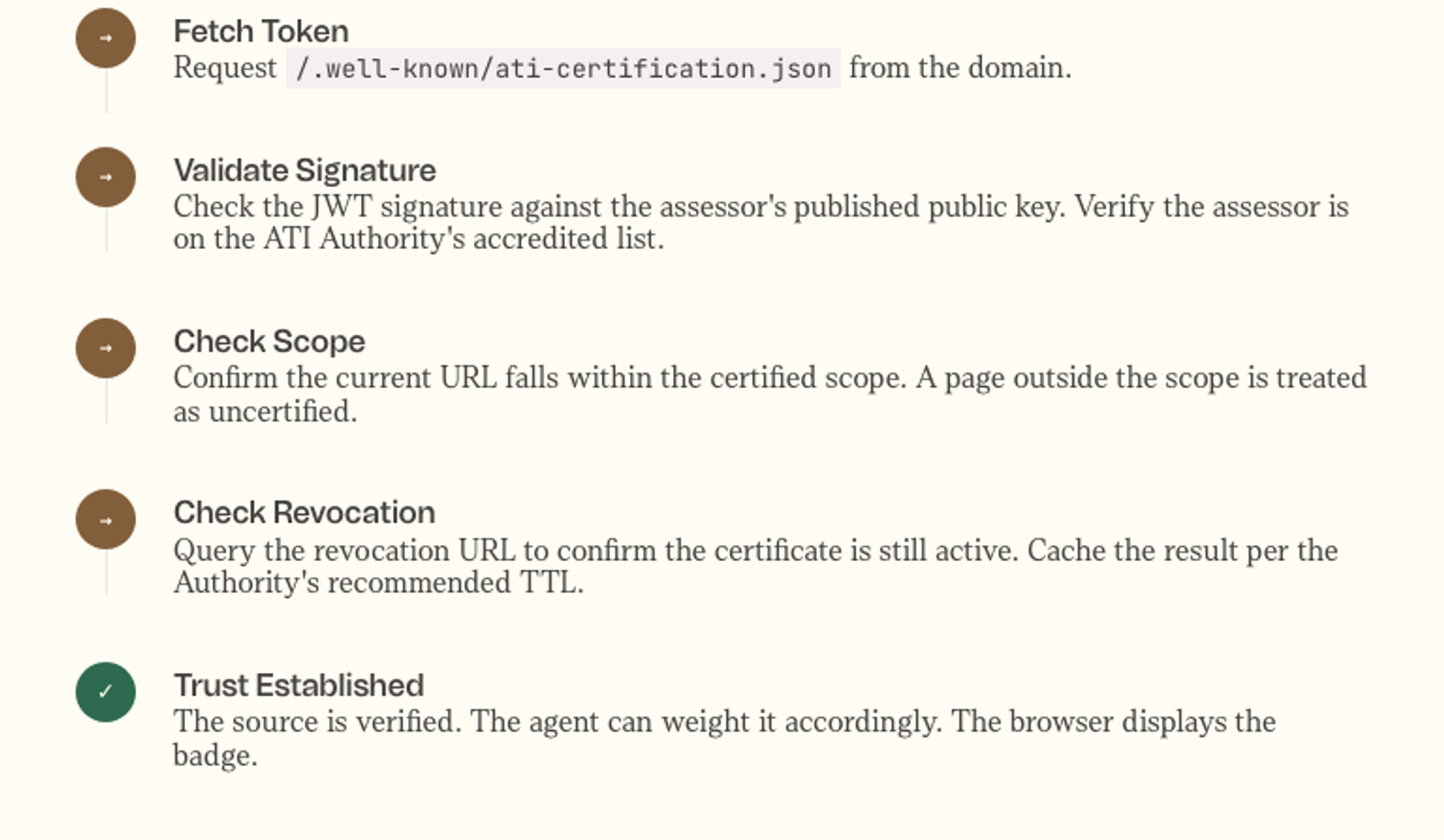

Verification Flow

An AI agent or browser encountering an ATI-certified page fetches the token, validates the signature against the assessor’s public key, confirms the URL falls within the certified scope, checks the revocation status, and — if all checks pass — treats the source as verified.
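The flow above can be sketched as a single function that runs the checks in order and stops at the first failure. Everything here is hypothetical: the `TrustToken` fields, the function name, and the three callables (`fetch_token`, `signature_is_valid`, `is_revoked`) stand in for network and cryptographic steps that the framework has not yet specified.

```python
from dataclasses import dataclass
from fnmatch import fnmatchcase
from typing import Callable, Optional, Tuple
from urllib.parse import urlsplit


@dataclass(frozen=True)
class TrustToken:
    """Already-parsed token; field names are assumptions for this sketch."""
    publisher_host: str
    tier: int
    scope_patterns: Tuple[str, ...]
    token_id: str


def verify_source(
    url: str,
    fetch_token: Callable[[str], Optional[TrustToken]],
    signature_is_valid: Callable[[TrustToken], bool],
    is_revoked: Callable[[str], bool],
) -> Tuple[Optional[int], str]:
    """Return (tier, reason); tier is None unless every check passes."""
    parts = urlsplit(url)
    host = parts.hostname or ""

    # 1. Fetch the token published for this host.
    token = fetch_token(host)
    if token is None:
        return None, "no token published"

    # 2. Validate the signature against the assessor's public key.
    if not signature_is_valid(token):
        return None, "signature check failed"

    # 3. Confirm the URL falls within the certified scope.
    path = parts.path or "/"
    in_scope = token.publisher_host == host and any(
        fnmatchcase(path, pattern) for pattern in token.scope_patterns
    )
    if not in_scope:
        return None, "URL outside certified scope"

    # 4. Check revocation status last, so the answer is as fresh as possible.
    if is_revoked(token.token_id):
        return None, "certification revoked"

    return token.tier, "verified"


# Stubbed dependencies, for demonstration only.
demo_token = TrustToken("publisher.example", 2, ("/analysis/*",), "cert-0001")
lookup = lambda host: demo_token if host == "publisher.example" else None
print(verify_source("https://publisher.example/analysis/rates", lookup, lambda t: True, lambda i: False))
print(verify_source("https://publisher.example/pricing", lookup, lambda t: True, lambda i: False))
```

Passing the dependencies in as callables keeps the ordering logic testable without a network or a key store. A failed check returns no tier, so the caller falls back to treating the source as uncertified.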

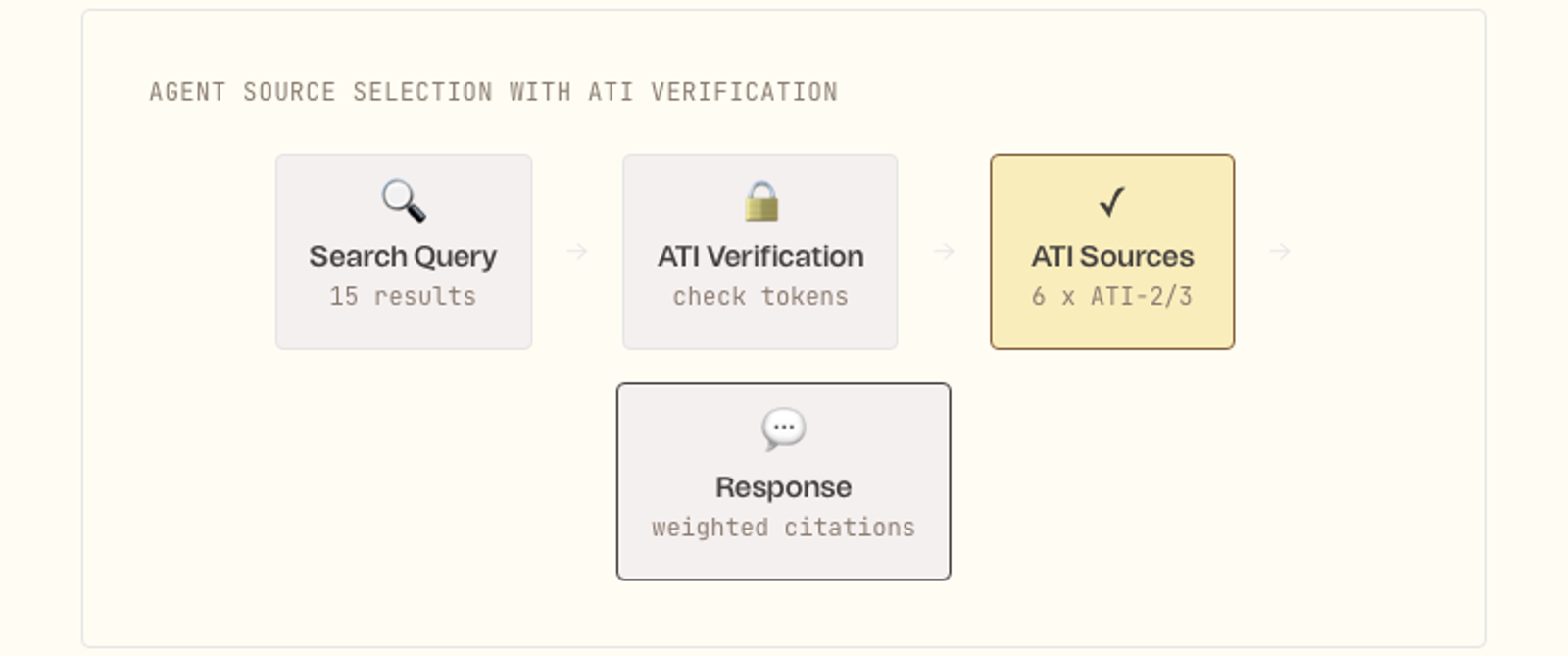

How AI Agents Use It

Today, when an AI agent searches the web and selects sources to cite, it has no standardised way to assess trustworthiness. With ATI certification, agents gain a machine-readable trust signal that can be verified in real time.

The agent can weight ATI-3 sources more heavily than ATI-1, require a minimum tier for sensitive query types (health, financial, legal), and stop treating a source as verified the moment its certification is revoked.
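One way an agent might apply these rules is sketched below. The weights, the minimum tiers per query type, and the `Source` structure are illustrative assumptions, not values proposed by the framework. A revoked certification is modelled by setting `verified_tier` to `None`, which puts the source back on the same footing as uncertified content.

```python
from dataclasses import dataclass
from typing import List, Optional

# Hypothetical multipliers: higher tiers count for more; None means uncertified.
TIER_WEIGHT = {None: 1.0, 1: 1.2, 2: 1.5, 3: 2.0}

# Hypothetical policy: sensitive query types require at least this tier.
MIN_TIER_BY_QUERY_TYPE = {"health": 2, "financial": 2, "legal": 2}


@dataclass(frozen=True)
class Source:
    url: str
    relevance: float              # the agent's own relevance score
    verified_tier: Optional[int]  # None if uncertified, unverifiable, or revoked


def rank_sources(sources: List[Source], query_type: str) -> List[Source]:
    """Drop sources below the query type's minimum tier, then rank by weighted relevance."""
    min_tier = MIN_TIER_BY_QUERY_TYPE.get(query_type, 0)
    eligible = [s for s in sources if (s.verified_tier or 0) >= min_tier]
    return sorted(
        eligible,
        key=lambda s: s.relevance * TIER_WEIGHT[s.verified_tier],
        reverse=True,
    )


candidates = [
    Source("https://blog.example/post", 0.9, None),
    Source("https://publisher.example/analysis/rates", 0.6, 3),
    Source("https://news.example/story", 0.7, 1),
]
print([s.url for s in rank_sources(candidates, "general")])
print([s.url for s in rank_sources(candidates, "health")])
```

For a general query every source stays eligible and the tier only adjusts the ordering. For a health query, under this example policy, only the ATI-3 source survives the filter.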

What This Doesn’t Replace

ATI certification doesn’t replace the agent’s own reasoning about source quality. It provides a verified baseline — a trust floor — that the agent builds on. Certified sources can still publish content that is wrong. The certification signals that there are processes in place to catch and correct that.

Landscape

ATI sits in a gap between existing approaches. C2PA proves where a piece of content came from. Google’s E-E-A-T guidelines assess authority, but opaquely. B Corp certifies business practices. None of them certifies editorial trustworthiness for AI consumption.

Governance

The framework requires an independent ATI Authority that sets and maintains the standards, accredits assessors, maintains the public registry, and handles complaints and revocation. The Authority does not perform certifications itself — it accredits independent organisations, mirroring the CA model in SSL and the assessor model in B Corp.

Learning from B Corp. The Authority cannot be funded primarily by the entities it certifies. Standards are published, versioned, and subject to public consultation. Governance, funding, and decision-making are transparent. The certification is designed to be hard to get and easy to lose.

What This Is Not

Not a content rating system. ATI does not assess whether specific claims are true or false. It assesses whether the publishing entity has the processes, accountability, and transparency to be treated as a trustworthy source.

Not a barrier to the open web. Uncertified content remains accessible, indexable, and citable. The framework creates a positive signal for verified sources, not a penalty for unverified ones.

Not SEO. The certification is not designed to improve search rankings. It provides a machine-readable trust signal for AI agents. Whether search engines incorporate it is a separate decision.

Not a replacement for editorial judgement. Certified sources can still publish content that is wrong, biased, or misleading. The certification signals that processes exist to catch and correct that — not that errors will never happen.

Next Steps

This document is a starting point. The framework needs a detailed technical specification for the token format, verification protocol, and revocation mechanism. It needs pilot standards for ATI-1 and ATI-2 developed with publishers, AI developers, and trust researchers. And it needs a small-scale pilot with willing publishers and at least one AI system to test the certification flow end-to-end.

The web is becoming the evidence base for AI reasoning at a civilisational scale. The question of which sources can be trusted is no longer academic. It’s infrastructural.

And right now, the infrastructure doesn’t exist.